Google's Lyria 3 AI Music Model Just Landed in Gemini

Henry Wadsworth Longfellow once described music as "the universal language of mankind." Google is now testing whether that definition still holds when the composer is a neural network. With the rollout of Lyria 3 inside the Gemini app, the company is putting AI music generation in front of Gemini's mainstream user base, with no musical background and no lyrics required. It's a meaningful step forward in a space that has been heating up fast, with competitors like Suno, Udio, and Meta's MusicGen all vying for position in the emerging AI audio market.

Lyria 3 arrives in Gemini with a simpler, faster workflow

Google DeepMind has been developing the Lyria model family for some time, but previous iterations were largely confined to developer-facing tools like Vertex AI: useful for builders, invisible to most users. Lyria 3 changes that. The model is now accessible directly through the Gemini app and web interface via a dedicated "Create music" option, putting it in front of the same broad consumer base that uses Gemini for writing, coding, and image tasks.

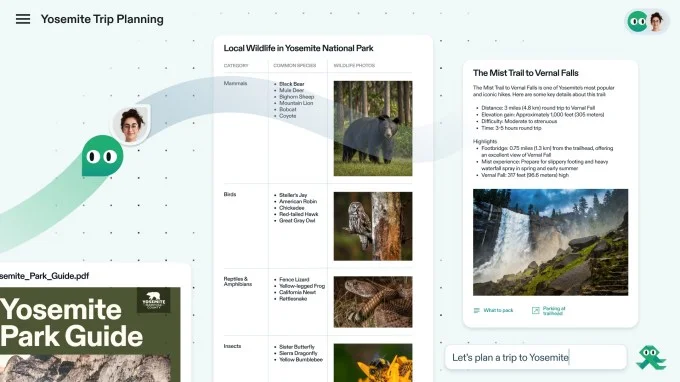

The generation process is deliberately low-friction. Users describe what they want in plain language, and optionally upload an image to help set the tone. Within seconds, the model produces a track. Lyria 3 is also notably faster than its predecessors, which matters when the target audience has no patience for a slow creative tool.

That image-to-music pathway is worth highlighting on its own. The ability to feed a photograph — a sunset, a city street, a portrait — and receive a corresponding audio mood is a genuinely novel interaction model. It suggests Google is thinking about Lyria 3 not just as a text tool but as a multimodal creative layer that can sit on top of other media. For content creators who work across formats, that kind of cross-modal generation could become a practical part of their workflow rather than a novelty.

No lyrics needed — the model fills in the blanks

The most telling feature of Lyria 3 isn't the speed or the image input — it's the removal of the lyrics requirement. Earlier versions of the model expected users to supply at least some textual content for the song. Lyria 3 drops that expectation entirely. A vague prompt is enough; the model will generate appropriate lyrics on its own and wrap them into a 30-second composition.

That 30-second ceiling deserves a closer look. It's long enough to establish a mood or a hook, but short enough that "jingle" is probably the more honest descriptor. Whether the constraint is a technical limitation or a deliberate product decision isn't clear, but it shapes what Lyria 3 is in practice: a tool for quick, disposable audio content rather than anything resembling a full song.

The auto-generated lyrics also raise questions that go beyond convenience. When a model writes the words from nothing more than a vague prompt, the authorship question becomes genuinely murky. Who owns that output? Current copyright frameworks in most jurisdictions are still catching up to AI-generated text, let alone AI-generated song lyrics embedded inside AI-generated music. Google has been relatively quiet on the specifics of how it handles ownership and licensing for Lyria 3 outputs, and that silence will likely attract scrutiny as the tool scales.

How Lyria 3 fits into the broader AI audio landscape

Google's decision to embed Lyria 3 directly into Gemini — rather than keeping it tucked inside a developer API — signals a shift in how the company views AI music generation: less as an experimental capability and more as a standard feature of a general-purpose assistant. The same pattern played out with image generation, which moved from research demos to consumer products over a relatively short window.

The competitive context matters here. Suno and Udio have built dedicated products around AI music generation and have attracted millions of users, but they also face active litigation from major record labels over training data. Google, with its deeper legal resources and existing licensing relationships with rights holders, may be better positioned to navigate that minefield — though it isn't immune to it. The Recording Industry Association of America has made clear it intends to pursue AI music companies aggressively, and a Google product with mainstream reach will draw attention regardless of how carefully it was built.

Meanwhile, the use cases for short-form AI audio are expanding quickly. Social media content, podcast intros, YouTube background tracks, app sound design, and advertising all represent real demand for fast, cheap audio that doesn't require licensing negotiations. Lyria 3's 30-second format maps neatly onto many of those needs, which suggests Google has a clear commercial thesis even if it isn't spelling it out explicitly.

Implications for creators, the music industry, and what comes next

The arrival of Lyria 3 in a consumer product used by hundreds of millions of people is not just a product update — it's a signal about where the creative economy is heading. For independent musicians and composers, tools like this represent both an opportunity and a threat. On one hand, AI-assisted music generation could lower the barrier to entry for people who have musical ideas but lack technical training. On the other, it puts a free, fast alternative in the hands of anyone who might otherwise have hired a composer for a small project.

The professional music industry has been watching this space with a mixture of fascination and alarm. Several high-profile artists have spoken out against AI music tools, arguing that they devalue human creativity and are trained on copyrighted work without consent. Those concerns aren't going away, and as Google's tool becomes more visible, it will likely become a focal point for that debate in a way that smaller startups haven't been.

There's also a longer arc to consider. Lyria 3 today produces 30-second clips. A version two or three years from now, trained on more data and running on better infrastructure, will likely produce longer, more sophisticated compositions. The current limitations are a snapshot of where the technology is, not a ceiling on where it's going. That trajectory is what the music industry is really reacting to: not the jingle, but what the jingle implies.

The philosophical question Longfellow's quote raises is real, even if it sounds rhetorical. Music generated without human intent, without lived experience, and without even a lyric supplied by the person requesting it occupies genuinely ambiguous creative territory. Google isn't pretending otherwise — the product is framed as generation, not composition. But as these tools become easier to access and harder to distinguish from human-made work, the industry will keep bumping into that ambiguity whether it wants to or not.

For now, Lyria 3 represents the most accessible version of Google's AI music work to date. It's a 30-second window into where this technology is headed — and a reminder that the most consequential shifts in creative tools rarely announce themselves loudly. They arrive as a simple button in an app you already use, and by the time the implications are fully understood, the behavior has already changed.