Google's Project Genie Turns a Single Photo or Text Prompt Into a Playable Interactive World

Google's ambitions in generative AI have quietly extended well beyond chatbots and image tools, and Project Genie is the clearest sign yet of where that ambition is pointing. The company has opened up its AI world model technology to a broader audience, though with a catch: access requires a subscription to Google's highest-priced AI tier. The move says a lot about how Google views this technology: not as a curiosity to be given away freely, but as a flagship capability worth paying for. Depending on how the technology matures, that bet could look either prescient or premature.

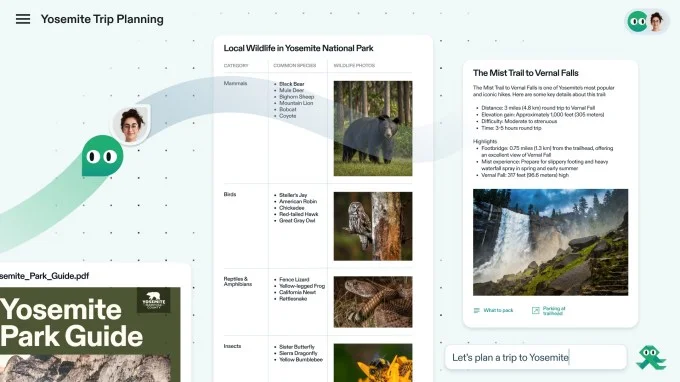

What Project Genie actually does

World models occupy a distinct corner of the AI space. Rather than generating a static image or a pre-rendered scene, they produce a dynamic, interactive video environment that responds to user inputs in real time. The result feels like navigating a virtual world, even though the underlying output is technically a video stream being generated on the fly. Think of it less as a game engine and more as a model that has learned the visual and physical logic of environments well enough to improvise them continuously, which is a fundamentally different challenge from generating a single coherent image.

Project Genie builds on Genie 3, the version Google unveiled last year, which stood out for its significantly improved long-term memory. Previous world models struggled to maintain coherent environments beyond a few seconds — objects would shift, lighting would drift, and spatial logic would quietly fall apart. Genie 3 pushed that window to a couple of minutes — modest by human standards, but a meaningful leap for the technology. Project Genie is essentially a polished, consumer-facing version of that system, now integrated with newer models including Nano Banana Pro and Gemini 3. The integration with Gemini in particular suggests Google is treating world generation not as a standalone feature but as part of a broader multimodal stack.
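The memory limitation is easier to picture with a deliberately simplified example. The Python sketch below is a hypothetical toy, not Genie's architecture: the `ToyWorldModel` class, its `step` method, and the idea of measuring memory as a fixed number of frames are all illustrative assumptions. It only shows the general principle that a model which produces each new moment from the user's latest input plus a bounded window of recent history cannot stay consistent about anything that has fallen out of that window.

```python
from collections import deque


class ToyWorldModel:
    """Hypothetical stand-in for an action-conditioned world model.

    This is NOT how Genie works internally. Each new "frame" is produced from
    the user's current action plus only the frames still inside a fixed-length
    memory window; anything older is dropped and can no longer be conditioned on.
    """

    def __init__(self, memory_frames: int):
        # Bounded context: a deque with maxlen evicts the oldest frame once full.
        self.memory = deque(maxlen=memory_frames)

    def step(self, action: str) -> dict:
        # A real model would render pixels; here a frame just records the action
        # taken and which earlier actions were still visible when it was generated.
        visible = [past["action"] for past in self.memory]
        frame = {"action": action, "remembers": visible}
        self.memory.append(frame)
        return frame


model = ToyWorldModel(memory_frames=3)
actions = [
    "place lamp in corner",
    "walk forward",
    "turn left",
    "turn right",
    "look back at corner",
]
for action in actions:
    frame = model.step(action)

# By the last step, the lamp placement has scrolled out of the window.
print(frame["remembers"])  # → ['walk forward', 'turn left', 'turn right']
```

In this toy, the lamp placed in the first step is no longer in the model's context by the fifth, which is loosely analogous to an object drifting once a real world model's coherence window has passed. Production systems are far more sophisticated, so treat this only as an intuition for why a longer window matters.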

Users can generate environments either by uploading a reference image or by describing what they want through a text prompt — specifying the setting, the character, the mood. The system then constructs an interactive environment around those inputs, allowing the user to move through and interact with what it generates. Google also ships a set of pre-built worlds for those who want to jump straight in without building from scratch, which serves as both an onboarding tool and a showcase of what the system looks like when it's working at its best.

Why the paywall matters for AI adoption

Gating Project Genie behind Google's most expensive AI subscription is a deliberate signal. When Google first introduced Genie 3, access was limited to a small circle of trusted testers, a standard approach for experimental technology that isn't quite ready for general use. Widening availability now, but only to paying subscribers at the top tier, suggests the company sees this as a premium differentiator rather than a broadly accessible tool. It mirrors how Google has handled early access to advanced Gemini features: drip the capability to high-value users first, gather signal, then decide how far to open the gates.

That positioning has real implications. World model technology is still early, and the memory limitation — a coherent environment lasting only a few minutes — means the experience is impressive as a demonstration but constrained as a practical creative tool. Tying it to a high-cost subscription means the people most likely to stress-test its limits are enthusiasts and professionals who are already invested in Google's ecosystem. That's a useful feedback loop for a technology that still has significant ground to cover. It also filters out casual users who might bounce after a single session, skewing the usage data toward people with genuine creative or technical intent.

The launch also puts Google in direct competition with other companies exploring interactive AI environments, where the race isn't just about image quality or response speed but about how long and how consistently a generated world can hold together under user interaction. Startups and research labs have been circling this space for a while, but Google's scale, in both compute and distribution, gives it a structural advantage if the underlying models keep improving at their current pace.

The gap between the demo and the experience

There's a recurring pattern with world model announcements: the showcase footage looks fluid and convincing, but the underlying constraints become apparent once users get hands-on time. A coherent memory window of a couple of minutes is genuinely better than what came before, but it also means the system can lose track of details in an environment that a user might expect to persist. An object placed in a corner might drift. A character's appearance might subtly shift. The spatial logic that felt solid a minute ago starts to fray. For casual exploration, that may not matter much. For anyone trying to build something with narrative continuity or consistent world logic, it's a real limitation that no amount of polished UI can paper over.

Google's decision to ship pre-built worlds alongside the generative tools is a smart hedge — it gives users a stable, polished experience while the more experimental creation features mature. It also sets a quality bar that the generative side of the product has to work toward, which is useful internal pressure. Whether the technology develops fast enough to justify the subscription cost for most users will depend on how quickly those memory and coherence limits get pushed further out.

What this means for the broader AI landscape

Project Genie's arrival as a consumer product — even a gated one — marks a shift in how world model research translates into real products. For years, this work lived almost entirely in academic papers and controlled demos. The fact that Google is now shipping it, attaching a price to it, and integrating it with its broader model ecosystem signals that the technology has crossed some internal threshold of readiness. That doesn't mean it's finished — far from it — but it does mean the feedback loop between research and real-world use is about to get a lot tighter.

For the AI industry more broadly, the stakes are high. If world models become a standard capability that major AI platforms offer, the downstream effects on game development, simulation, training data generation, and interactive storytelling could be substantial. Developers who currently rely on traditional game engines to build environments might eventually have an alternative that requires no assets, no scripting, and no rendering pipeline: just a prompt and a model that knows how the world is supposed to behave. That future is still some distance away, but Project Genie is one of the more concrete steps toward it that any company has taken publicly.

The subscription model also raises a longer-term question about access and equity in AI tooling. If the most capable generative tools continue to sit behind high-cost tiers, the gap between well-resourced creators and everyone else widens. That's not unique to Google — it's a pattern playing out across the industry — but it's worth naming as these capabilities move from research curiosities to genuine creative infrastructure.

Project Genie is not yet the tool that changes how people build interactive experiences. But it's a credible, tangible step in that direction — and the speed at which Google is moving from research paper to consumer product suggests the gap between where the technology is now and where it needs to be is closing faster than the cautious framing around memory limits might imply. The next few iterations will be worth watching closely.